TL;DR

- LLM search works by retrieving indexed content chunks first, then generating an answer using only those retrieved sections.

- Indexing in LLM systems creates a searchable database of content chunks that the system can select from.

- Content structure determines how clearly ideas are separated before indexing. Clean structure leads to accurate chunk retrieval.

- Metadata improves retrieval precision by labeling chunks with context such as topic, region, audience, and freshness.

- Structured data is website markup that helps machines identify entities and relationships, supporting clarity and credibility but not guaranteeing citations.

What Is LLM Search and How Does It Work?

LLM search is a system in which a language model answers a question using stored content.

The language model does not crawl your website in real time. It only reads content that has already been indexed inside a searchable system.

The process typically follows these steps:

- Your content is ingested into an internal system.

- The system breaks that content into smaller sections.

- Each section is converted into a mathematical representation of meaning.

- These sections are stored inside a searchable database.

- When a user asks a question, the system retrieves the most relevant stored sections.

- The language model reads those retrieved sections and writes the final answer.

This retrieval step happens inside the LLM search system. It selects chunks from the internal index, not directly from your website pages.

If the wrong chunks are retrieved, the answer becomes inaccurate. If the right chunks are retrieved, the answer becomes reliable for the LLM model.

LLM search performance is therefore driven by selection quality.

How Indexing Happens in LLM Systems

Indexing in LLM systems means building a searchable library of your content so the LLM's retrieval engine can find it later.

Instead of storing full pages as single units, the system divides them into smaller content chunks. Each chunk should ideally contain one clear idea.

Indexing usually happens in three connected steps.

Step 1: Chunking

The system splits content into smaller units. Cleanly separated ideas improve chunk clarity.

Step 2: Embedding

Each chunk is converted into a vector representation. This allows the system to match meaning rather than just exact words.

Step 3: Storage

Embeddings, along with their metadata, are stored in a vector index. This index is what the retrieval engine searches when a question is asked.

Below is a comparison of these steps and their purpose.

.avif)

The retrieval engine inside the LLM search system becomes unreliable when:

If chunking combines multiple ideas into a single block, the system retrieves unclear fragments.

If embeddings are noisy, similarity matching becomes less precise.

If the index is incomplete or outdated, the correct chunk is never retrieved.

Indexing determines what exists inside the system. If content is not indexed properly, it cannot be selected.

How LLMs Index Websites?

Website indexing for LLM search starts with ingestion.

Ingestion means pulling content from your website into the internal indexing system.

This usually involves four stages -

Crawl and Fetch

A crawler fetches HTML pages. Sitemaps, canonical tags, and robots.txt files influence which pages are accessible for ingestion.

Extract Main Content

Navigation bars, footers, and sidebars are removed. Only the primary content area is retained for indexing.

Chunk and Embed

The extracted content is divided into chunks, converted into embeddings, and stored in the index.

Maintain Freshness

If content changes, it must be re-ingested. Updated dates and version markers help ensure retrieval selects the most current material.

Some organisations publish an llms.txt file to signal which pages are safe and useful for AI systems to ingest. Adoption is still evolving, but the goal is transparency in ingestion preferences.

A clean website structure improves extraction. Clean extraction improves indexing. Clean indexing improves retrieval accuracy inside the LLM system.

What Is Structured Content and Why Does It Matter?

Structured content refers to how information is organised on a page for human readability.

It usually includes:

- Clear heading hierarchy

- Short paragraphs

- Bullet lists

- Tables

- Separated definitions

Structured content affects the quality of chunking during indexing.

If a paragraph contains three unrelated ideas, the chunk created from it will blend them. This reduces retrieval precision.

When the structure is clean, chunk boundaries are clear. Clear boundaries improve embedding clarity. Clear embeddings improve retrieval accuracy.

Structured content improves how your information is divided before it enters the index.

What Is Metadata in LLM Search?

Metadata is descriptive information about your content.

It helps the LLM's retrieval engine filter and select the correct chunk.

Metadata can exist at two levels.

- Page-level metadata describes the entire page.

- Chunk-level metadata describes individual sections stored in the index.

A useful metadata contains:

- Topic

- Audience

- Region

- Product line

- Content type

- Last updated date

- Version number

- Source URL

Metadata also improves selection precision.

For example, if two chunks discuss pricing but apply to different regions, region metadata prevents the retrieval engine from selecting the wrong one.

Metadata does not change the content itself. It improves the accuracy with which the system selects relevant content during retrieval.

How to Structure Metadata Clearly?

Metadata should remain simple and consistent.

A clean metadata structure looks like this -

Page Level Metadata

Title

Primary topic

Primary entity, such as a product or service

Owner team

Last updated date

Version number

Chunk Level Metadata

Section topic

Intent type, such as definition or comparison

Audience type

Region

Product reference

Last reviewed date

Each metadata field should answer one specific question.

- What is this about?

- Who is it for?

- Where does it apply?

- When was it last accurate?

Too many metadata fields create confusion. Focus on clarity over complexity.

What Is Structured Data?

Structured data is machine-readable markup added to a website page.

It uses standard formats such as JSON-LD, Microdata, and RDFa to consistently describe entities and relationships.

Structured data helps machines clearly understand that a page is an article, who the publisher is, who the author is, and whether the page describes a specific product.

Structured data is different from structured content.

Structured content organises information visually for humans.

Structured data adds technical markup for machines to understand.

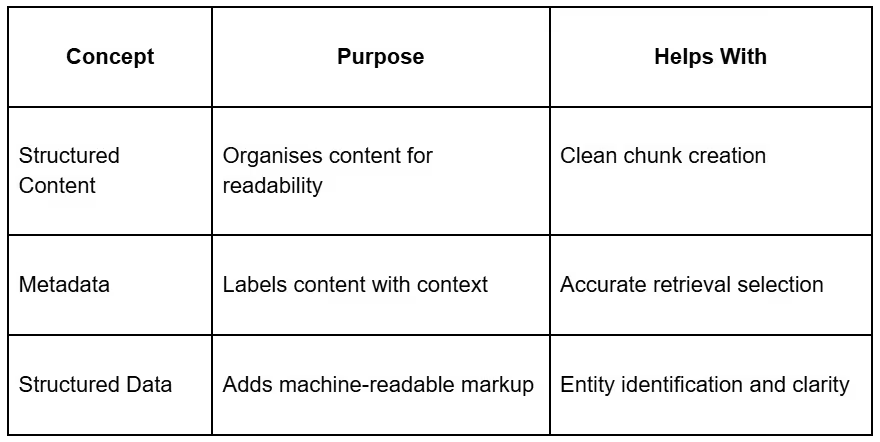

Structured Content vs Metadata vs Structured Data

These three concepts solve different problems. The table below shows how each one improves AI visibility and where it operates within the overall system -

Types of Structured Data

Most business websites benefit from a small set of schema types.

An organization defines brand identity.

A person defines authors or leadership.

The article defines blog content.

Product defines product pages.

The FAQ page defines question-and-answer sections.

HowTo defines step-based instructions.

BreadcrumbList defines the site hierarchy.

Structured data must match visible page content. Incorrect markup reduces trust.

LLM Visibility Is Earned at the Indexing Layer

LLM search performance depends on how accurately the system selects content from its index. This selection is shaped by complete indexing, clean content structure, precise metadata, and accurate structured data.

Indexing decides what exists inside the LLM system, structure defines how clearly ideas are separated, metadata guides which chunks are selected, and structured data clarifies entity identity on the website.

The organisations that win in AI search will not be those producing the most content, but those organising their knowledge systems with discipline and precision.

Do you want more traffic?

How Is AI Search Reshaping Pharma and Healthcare Marketing in India?