Quite a few AI visibility tools have reached marketing teams, promising to track your brand’s visibility and help you compete in the marketing jungle.

They cover every major platform: ChatGPT, Perplexity, Claude, and AI Overviews. But they seldom address the most fundamental question, which, in simple terms, is: “Who is asking the query?”

They run your prompts through an API. The API doesn't know which user is asking a prompt. It doesn't reflect any buying history, persona, or profession. Every prompt gets a generic answer that no one in your category would ever see.

If your dashboard shows ChatGPT mentioning your brand 14% of the time, the honest version of that number depends entirely on whether the AI thought a developer, a CMO, or a team lead was asking.

The same prompt produces three different brand recommendations. The tools already on the market calculate the average number of brand mentions and call it visibility.

We built fta.visibility to fix that gap, and a few others. Across the enterprise brands we've onboarded, the same prompt run with three different personas produces a different top-mentioned brand more often than not.

If you want to see the platform in action before booking a call, we've put together a full walkthrough here.

What most SEO teams get wrong before they even start measuring AI visibility

Before we get into the platform, here is what we keep hearing from enterprise teams who have tried other AI visibility tools before reaching us.

The dashboards looked fine to them, but there was still an issue. Competitors were showing up in categories these teams clearly owned. Buyers were arriving for sales calls already having formed a shortlist, and the brand was not on it.

The AI had recommended someone else, and nobody had caught it because the visibility tool was showing green.

The disconnect is almost always the same thing. Generic prompts, run without persona context, return generic answers.

A prompt asking "which cybersecurity platform should I use" returns a very different set of brand recommendations depending on whether the AI assumes the question is from a 28-year-old developer at a startup or a CISO at a 5,000-person regulated enterprise.

Most tools never make that distinction. They average the two answers and call it visibility.
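To make the averaging problem concrete, here is a minimal sketch using made-up data. The persona labels, brand names, and helper functions are all hypothetical and are not fta.visibility output or its API. It only illustrates how a blended mention rate can hide the fact that each persona sees a different top brand.

```python
from collections import Counter

# Hypothetical crawl results: for each persona, the brands named in each
# AI response to the same prompt. All names are illustrative only.
responses_by_persona = {
    "developer": [["BrandA", "BrandB"], ["BrandA"], ["BrandA", "BrandC"]],
    "cmo": [["BrandB"], ["BrandB", "BrandC"], ["BrandB"]],
    "team_lead": [["BrandC"], ["BrandC", "BrandA"], ["BrandC"]],
}


def top_brand(responses):
    """Return the brand that appears in the most responses."""
    counts = Counter(brand for response in responses for brand in set(response))
    return counts.most_common(1)[0][0]


def mention_rate(responses, brand):
    """Share of responses that name the brand at least once."""
    return sum(brand in response for response in responses) / len(responses)


# Per-persona view: who leads for each kind of buyer.
per_persona_top = {p: top_brand(r) for p, r in responses_by_persona.items()}

# Blended view: what a persona-free average would report for BrandA.
all_responses = [r for rs in responses_by_persona.values() for r in rs]
blended_rate = mention_rate(all_responses, "BrandA")
per_persona_rate = {
    p: mention_rate(r, "BrandA") for p, r in responses_by_persona.items()
}

print(per_persona_top)
print(round(blended_rate, 2), per_persona_rate)
```

In this toy data, BrandA looks like a mid-table brand in the blended number, yet it leads with developers and is absent for CMOs. The average describes neither buyer.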

Across the accounts we have onboarded since launch, the same prompt, run with three different buyer personas, surfaces a different top-recommended brand in most cases.

This single insight shaped every decision we made in fta.visibility, starting with personas as the foundational verification layer rather than an optional add-on.

"When we built fta.visibility, the first argument we had internally was about personas. Many tools in this space run prompts through a clean API, but the results appear questionable.

We refused to do that, because the buyer asking ChatGPT about your category is never a clean API. They are a CMO, a team lead, and a developer. The persona changes the answer. Until the tool reflects that, the data is theatre." Arun Kumar D, VP Product Engineering

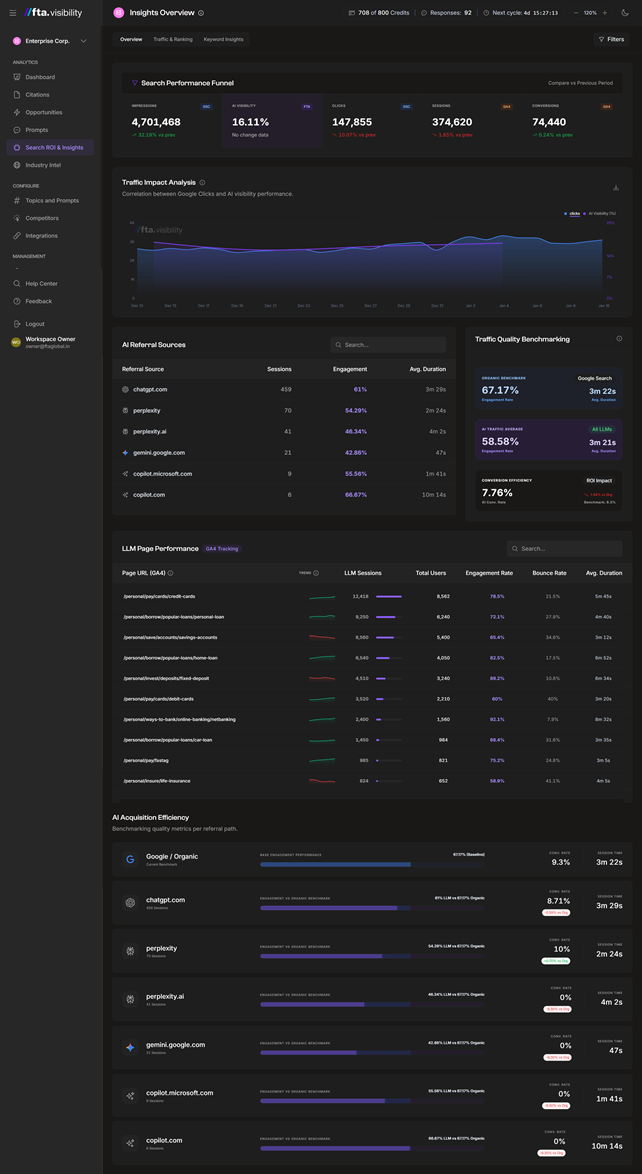

Unified Intelligence Dashboard

Here is a quick overview of the Unified Intelligence Dashboard:

- The Unified Intelligence Dashboard is the single screen that connects every layer of the funnel.

- Impressions and AI visibility sit alongside clicks, sessions, and conversions on the same timeline, so you can finally see how a shift in AI visibility translates into actual pipeline.

- AI referral sources show you which platforms are sending qualified traffic and which ones are sending visitors who bounce in 12 seconds.

- LLM page performance tells you exactly which URLs ChatGPT and Perplexity are pointing buyers towards, and what those buyers do after they land.

- The traffic quality view sits beneath it all, comparing how AI-sourced visitors engage relative to Google organic traffic.

The five things every enterprise should be tracking

If you're auditing your current AI visibility setup, these are the five things your dashboard should show you:

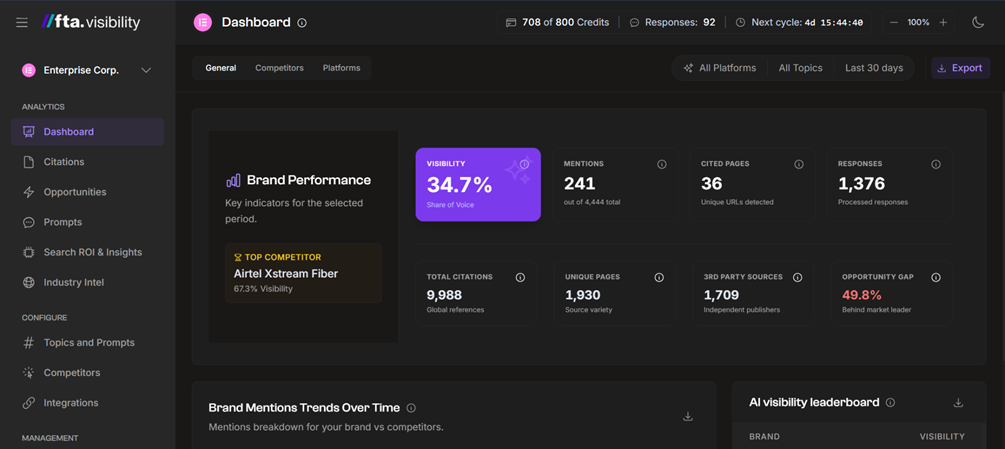

- Visibility percentage by platform, not in aggregate. ChatGPT and Perplexity disagree more often than they agree. Your brand might be mentioned twice on Perplexity for a query and only once on ChatGPT for the same query. This asymmetry is a signal. It tells you which engine is more receptive to your content right now, and where you have more work to do.

- Mentions and citations as separate metrics. A mention is when the AI names your brand in the answer text. A citation is when the AI links to a page on your domain as a source. Those are completely different events with completely different fixes.

- Persona-tagged visibility. As covered in the opening, the same query produces different brand recommendations depending on who the AI thinks is asking. If you're not running prompts through specific personas and your actual buyer profiles, your visibility data will be generic and fail to reflect reality.

- Persistence across weekly crawls. A 22% visibility score for one week is interesting. The same number across eight weekly crawls is durable. The score that bounces between 18 and 28 every week indicates the AI knows about your brand for that query, but isn't convinced enough to cite you reliably.

- Source-level intelligence. Forget keywords. The real competitive question in AI search is which third-party domains the AI engines pull from to answer your prompts. When Wikipedia, LinkedIn, Reddit, and Medium keep showing up in your category citations, that's not noise. This is a map of where AI engines go for trust signals. If you can place content on those domains, you're increasing your chances of being cited downstream.
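The persistence check in the list above can be sketched as a small calculation over weekly scores. This is an illustration under stated assumptions: the `persistence_summary` helper and the 5-point `max_swing` threshold are hypothetical choices, not values the platform uses.

```python
from statistics import mean


def persistence_summary(weekly_scores, max_swing=5.0):
    """Summarise weekly visibility percentages for one prompt on one platform.

    max_swing is an assumed threshold: a score that moves by no more than
    this many points across the window is treated as durable.
    """
    swing = max(weekly_scores) - min(weekly_scores)
    return {
        "mean": round(mean(weekly_scores), 1),
        "swing": swing,
        "durable": swing <= max_swing,
    }


# Eight weekly crawls that hold around 22%.
stable = persistence_summary([22, 22, 21, 23, 22, 22, 23, 22])

# Eight weekly crawls that bounce between 18% and 28%.
bouncy = persistence_summary([18, 28, 19, 27, 18, 28, 20, 26])

print(stable)
print(bouncy)
```

Both series average out within a point of each other, which is why the average alone is not enough. The swing is what separates a durable position from one the AI has not settled on.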

Brand performance dashboard

Think of it as your share of voice in AI answers for the prompts you track.

Comparing a mention vs a citation

Confusing mentions with citations is the most expensive mistake in AI search measurement, because the distinction changes how you read every AI visibility number you see.

A mention happens when the AI names your brand in the body of its answer. If ChatGPT says, "I recommend SBI YONO for digital banking", SBI just got a mention.

The mention came from the AI's training data, from third-party content, from sources the model already trusts.

A citation is something different. A citation is when the AI links to a specific page on your website as a source for what it just said.

The same response could mention SBI YONO without citing any SBI page, or it could cite an SBI help article without naming SBI in the answer text at all.

You can have one without the other. We see brands every week that get heavily mentioned but rarely cited, which means AI knows about them but isn't pulling content from their sites.

We also see brands that are frequently cited but rarely mentioned, meaning their content is used as a reference, yet the AI recommends someone else by name.

The fixes for these two problems look nothing alike. A mention problem is solved through entity engineering: clean Wikipedia presence, consistent brand naming across third-party sources, and mentions in trusted publications.

A citation problem is solved through on-site content engineering: better passage structure, clearer scope statements, and content that answers the prompt directly enough for the AI to lift it.

Most AI visibility tools collapse mentions and citations into one number. The number is meaningless, because the underlying problem could be either thing.
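Keeping the two metrics apart is straightforward once each response is parsed into its answer text and its list of linked sources. The sketch below is one possible way to do it, not the platform's parser. The `classify_response` helper, the `sbi.example` domain, and the sample URLs are hypothetical placeholders.

```python
import re
from urllib.parse import urlparse


def classify_response(answer_text, cited_urls, brand_names, brand_domain):
    """Report mention and citation as two separate flags for one AI response."""
    # Mention: any brand name variation appears as a whole phrase in the text.
    mentioned = any(
        re.search(rf"\b{re.escape(name)}\b", answer_text, flags=re.IGNORECASE)
        for name in brand_names
    )

    # Citation: any linked source sits on the brand's domain or a subdomain.
    cited = False
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == brand_domain or host.endswith("." + brand_domain):
            cited = True
            break

    return {"mentioned": mentioned, "cited": cited}


names = ["SBI YONO", "SBI"]

# Mentioned by name, but the only source is a third-party page.
mention_only = classify_response(
    "I recommend SBI YONO for digital banking.",
    ["https://www.reviews.example/best-banking-apps"],
    names,
    "sbi.example",
)

# A brand page is used as the source, but the brand is never named.
citation_only = classify_response(
    "You can reset the PIN from the app settings.",
    ["https://help.sbi.example/reset-pin"],
    names,
    "sbi.example",
)

print(mention_only, citation_only)
```

Reporting the two flags separately is what lets you tell an entity problem from a content problem.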

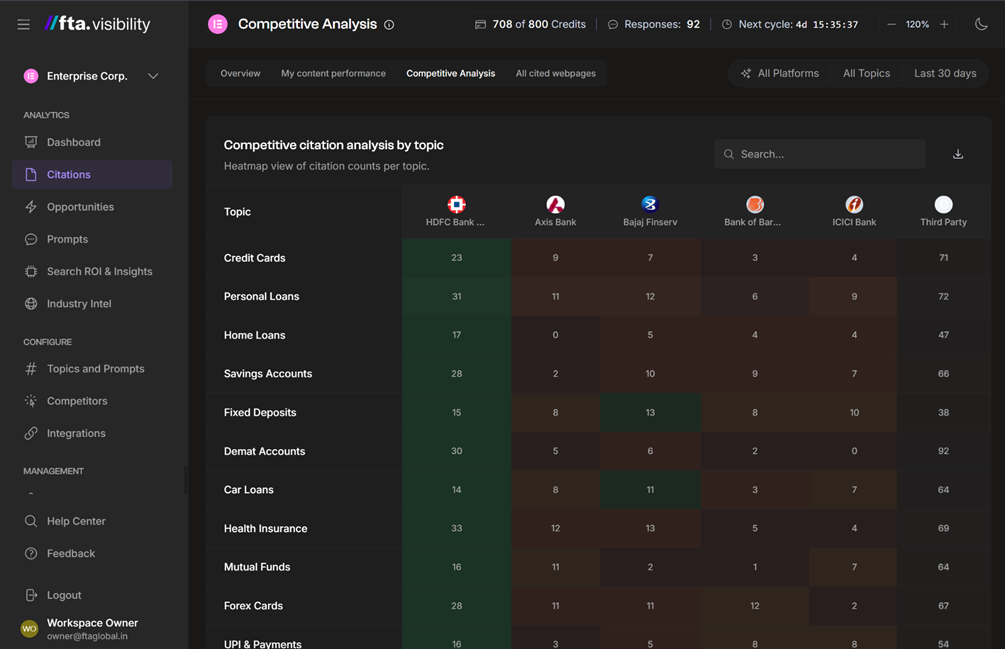

Competitive Citation Analysis (Heatmap)

The heatmap shows how citations and mentions are distributed across topics and brands. At a glance, you can see where you lead and where rivals dominate.

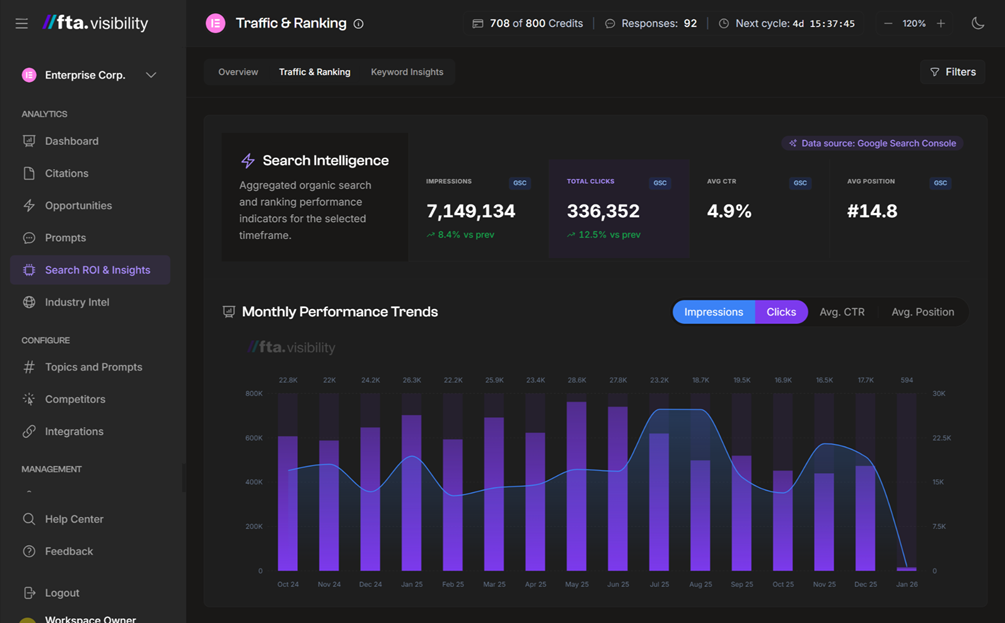

Traffic & Ranking Trends

The Traffic and Ranking Trends display integrates your Search Console metrics: impressions, clicks, average CTR, and average position.

What to do for pages that AI reads but doesn’t cite in the answer?

A source is a page that AI retrieved during a query, read, and considered as a reference, but ultimately didn't cite in the final answer.

Usually, it didn't make the cut because the page lacked sufficient on-topic content to justify the citation, or another source covered the same ground more directly.

Why does this category matter? A source is a page that's already halfway to becoming a citation.

The AI knows about it and trusts it enough to retrieve it. It just wasn't convinced by what it found.

Refresh that page with content that answers the prompt directly, and it stands a strong chance of moving from source to citation on the next crawl cycle.

Across the enterprise accounts we've audited, the biggest opportunity lies in content that is retrieved but not cited.

Most teams ignore it because most tools don't show it. The pages on that list are the ones your team should refresh first.
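If your tooling exposes both the retrieved URLs and the cited URLs for each crawl, the refresh list is a simple set difference. This is a minimal sketch under that assumption. The `refresh_candidates` helper, the `brand.example` domain, and the sample URLs are hypothetical.

```python
from urllib.parse import urlparse


def refresh_candidates(retrieved_urls, cited_urls, own_domain):
    """Own-domain pages the AI retrieved but never cited, most retrieved first."""
    cited = set(cited_urls)
    counts = {}
    for url in retrieved_urls:
        host = (urlparse(url).hostname or "").lower()
        on_own_domain = host == own_domain or host.endswith("." + own_domain)
        if on_own_domain and url not in cited:
            counts[url] = counts.get(url, 0) + 1
    # Sort by retrieval count (descending), then by URL for a stable order.
    return sorted(counts, key=lambda u: (-counts[u], u))


retrieved = [
    "https://www.brand.example/pricing",
    "https://www.brand.example/guide",
    "https://www.brand.example/guide",
    "https://other.example/review",
    "https://www.brand.example/faq",
]
cited = [
    "https://www.brand.example/faq",
    "https://other.example/review",
]

candidates = refresh_candidates(retrieved, cited, "brand.example")
print(candidates)
```

Pages retrieved most often without a citation rise to the top, which is a reasonable order to work through when refreshing.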

There's a related insight in the same view. Reddit citations have grown significantly across both ChatGPT and Perplexity over the last several months.

If your category has any active Reddit conversations and you have no presence there, that's a clearer signal than any keyword research tool can give you.

SEO tools vs AI visibility tools

Here is the difference between classic SEO tools and AI visibility tools on different visibility metrics:

The gap between these two columns is where most enterprise pipelines are being lost. A brand can rank on page one of Google and still be invisible inside the AI answer the buyer actually reads.

How does fta.visibility work?

- A governed crawl cycle. You define your prompts, your competitors with their brand variations, the personas your real buyers represent, and the data sources that prove impact. The platform runs those prompts across ChatGPT and Perplexity weekly, four times a month, and parses every response for mentions, citations, exact pages cited, and competitor activity. Google Gemini, Claude, and Google AI Mode are on the roadmap.

- The persona system. Every prompt is attached to a persona that appears before the query as a system instruction. The instruction changes who the AI thinks is asking, which changes the answer, which in turn changes which brands are recommended. Three personas per account is the sweet spot for most B2B brands.

- The unified data overlay. Google Search Console, GA4, and AI visibility data sit on the same timeline. You can see how AI visibility correlates with traditional impressions and clicks, and whether AI-sourced traffic engages at a different rate than Google organic.
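The persona mechanism described above can be sketched as follows. This is an illustration, not the platform's implementation: the `Persona` fields, the `build_messages` helper, and the wording of the system instruction are all hypothetical. The role and content message shape follows the chat format that LLM APIs commonly accept, and no API call is made here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    name: str
    role: str
    company: str


def build_messages(persona, prompt):
    """Place the persona before the query as a system instruction."""
    system = (
        f"The user is a {persona.role} at {persona.company}. "
        "Answer as you would for that reader."
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": prompt},
    ]


# Three personas per account, as suggested for most B2B brands.
personas = [
    Persona("developer", "28-year-old developer", "an early-stage startup"),
    Persona("ciso", "CISO", "a 5,000-person regulated enterprise"),
    Persona("team_lead", "team lead", "a mid-sized software company"),
]

prompt = "Which cybersecurity platform should I use?"

# One message list per persona: the same query, a different asker each time.
runs = {p.name: build_messages(p, prompt) for p in personas}

for name, messages in runs.items():
    print(name, "->", messages[0]["content"])
```

Each message list would then be sent to the AI platform separately, and the responses parsed per persona rather than pooled.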

In most accounts we run, AI-sourced traffic engages at a higher rate than Google organic, with longer session durations. If you're trying to make the case internally for why AI visibility deserves budget, the comparison is the one to put on the slide.

How do we read the fta.visibility dashboard every week?

The whole audit takes under 30 minutes. Run through these steps every Monday:

- Check your visibility percentage. Look at the weekly trend, not just the number. A declining trend on a high score is more urgent than a stable score that looks low.

- Open the Prompts section. Find any prompt where you lost a mention this week. Read the actual AI response and see which competitor took your place.

- Go to Opportunities and sort by topic. Pick the one topic losing the most visibility. Make it the week's content focus.

- Open the Sources section and filter for your own domain. Those are the pages AI is reading but not citing yet. Put them at the top of your refresh list.

- Check the Sourcing Trend chart under Citations. Note which third-party platforms AI is pulling from most in your category. Plan one piece of off-site content there each month.

- Once a month, open Search and Insights. The traffic quality comparison between AI referrals and Google organic is the number to take to your leadership review.
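The second step of the checklist above, finding prompts where you lost a mention, amounts to a week-over-week comparison. Here is a minimal sketch, assuming you can export the set of brands mentioned per prompt for each weekly crawl. The `lost_mentions` helper, prompts, and brand names are hypothetical.

```python
def lost_mentions(last_week, this_week, brand):
    """Prompts where the brand was mentioned last week but not this week.

    Each argument maps a prompt to the set of brands mentioned in the AI
    response. The result maps each lost prompt to the brands that newly
    appeared, which are the likeliest candidates for who took your place.
    """
    lost = {}
    for prompt, brands_before in last_week.items():
        brands_now = this_week.get(prompt, set())
        if brand in brands_before and brand not in brands_now:
            lost[prompt] = sorted(brands_now - brands_before)
    return lost


last_week = {
    "best crm for startups": {"BrandA", "BrandB"},
    "crm with invoicing": {"BrandA"},
}
this_week = {
    "best crm for startups": {"BrandB", "BrandC"},
    "crm with invoicing": {"BrandA"},
}

lost = lost_mentions(last_week, this_week, "BrandA")
print(lost)
```

The output only points you to the right prompts. Reading the actual AI response is still the step that tells you why the competitor was preferred.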

AI Visibility is the only diagnostic parameter to let your buyers reach you

The teams getting AI visibility right today are not the ones with the most tools. They are the ones who treat it like a diagnostic system rather than a single number.

They look at branded versus non-branded clicks and ask whether they have a brand awareness problem or a category-association problem.

They look at mentions versus citations and ask whether they have an entity problem or a content problem. They examine platform asymmetry and ask why ChatGPT and Perplexity perceive them differently.

The diagnostic mindset is the difference between a visibility report and a visibility strategy.

By 2027, AI visibility will sit alongside organic traffic and pipeline as a standard board metric.

The brands that build the muscle now will spend the next two years compounding their advantage while their competitors are still arguing about which acronym to use.
