TL;DR

- LLM-powered search does not answer a prompt in one straight line. It breaks the prompt into many smaller questions, then pulls evidence for each one.

- A single B2B question, such as "best CRM for a mid-market SaaS team," triggers checks on pricing, integrations, security, migration effort, ROI, proof, and vendor risk. Those checks are the fan-out queries.

- SEO changes because the system is not looking for a single perfect page for a single keyword. It is assembling a complete answer from the best passages it can retrieve across many pages.

- Visibility now comes from owning the branches that decide the deal. Owning a branch means having a section that answers one specific buyer concern with proof and clarity.

- Generic content gets blended into the crowd. Proprietary proof, such as caselets, benchmarks, and frameworks, is surfaced and cited.

B2B search behaviour has fundamentally changed because large language models do not retrieve a single ranked page to answer a query. They break a complex prompt into multiple structured sub-investigations, validate each independently, and then create a unified response.

When you ask a B2B buying question, the model typically checks feasibility, risk, cost structure, integration depth, implementation complexity, and vendor credibility before presenting an answer.

This means visibility no longer depends on ranking for a keyword. It depends on whether your content resolves one or more high-impact decision branches with clarity and proof.

What Are Fan-Out Queries in LLM-Driven Search?

A fan-out query is the system-generated expansion of a single user prompt into multiple supporting searches that reduce uncertainty before an answer is formed.

For example, if a marketer asks, “What is the best AI SEO platform for a fintech company expanding in India?”, the system does not simply compare feature lists.

It expands the question to include regulatory suitability, multilingual capabilities, integration with existing CRM systems, documented performance results, pricing scalability, and vendor stability.

Each of these expansions becomes a distinct sourcing task.

The system behaves less like a search engine and more like a research analyst conducting structured due diligence before making a recommendation.

In consumer search, the branching may stay shallow because the financial and operational stakes are limited. In B2B search, the branching widens because wrong decisions carry long-term cost and reputational risk.

Fan-out architecture, therefore, transforms search from information retrieval into risk-weighted evaluation.
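To make the expansion concrete, here is a minimal Python sketch of the fintech example. It assumes a fixed, hand-written mapping of decision facets (`FACETS`) to string templates; a real LLM generates its sub-queries dynamically from the prompt, so the facet names, templates, and the `fan_out` helper are hypothetical illustrations rather than how any particular system works.

```python
from dataclasses import dataclass

# Hypothetical decision facets for the fintech example. A real LLM
# generates sub-queries dynamically; this fixed mapping is an assumption.
FACETS = {
    "regulatory suitability": "{subject} regulatory compliance {market}",
    "multilingual capabilities": "{subject} multilingual support {market}",
    "CRM integration": "{subject} integration with existing CRM systems",
    "documented results": "{subject} fintech case studies results",
    "pricing scalability": "{subject} pricing scalability",
    "vendor stability": "{subject} vendor stability funding",
}


@dataclass
class SubQuery:
    facet: str  # which decision branch this sub-query serves
    text: str   # the search string that would be sent to retrieval


def fan_out(subject: str, market: str) -> list[SubQuery]:
    """Expand one prompt into one sub-query per decision facet."""
    return [
        SubQuery(facet, template.format(subject=subject, market=market))
        for facet, template in FACETS.items()
    ]


if __name__ == "__main__":
    for sq in fan_out("AI SEO platform", "India"):
        print(f"{sq.facet:<26} -> {sq.text}")
```

Each facet becomes its own sourcing task, which is why a page that only compares feature lists answers at most one of the six branches.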

How Do LLMs Break Down Complex B2B Prompts?

The decomposition process begins with intent analysis. The system identifies the objective, constraints, industry context, and implied success criteria embedded in the prompt.

Suppose you search for “ERP systems suitable for manufacturing firms with under 500 employees and multi-country operations.”

This single query contains operational scale, industry specificity, geographic compliance, and budget sensitivity.

The system separates these dimensions into four evaluation tracks:

- One evaluation track assesses whether the vendor truly fits manufacturing-specific workflows and operational realities.

- A separate track examines localisation requirements, including regional tax structures and regulatory compliance obligations.

- Integration depth with existing supply chain, inventory, and production systems is evaluated as a distinct operational concern.

- Customer case studies and documented results from companies of similar size and complexity are analysed to validate real-world performance.

Retrieval runs across these tracks in parallel. Instead of retrieving one high-ranking article, the system extracts passages that answer each track precisely.

The synthesis stage then reconciles the findings into a coherent response that appears singular to the user.

From an SEO standpoint, this means your authority is judged within micro-contexts.

You are not competing for “ERP systems” broadly. You are competing inside manufacturing-specific, mid-market, multi-country operational constraints.
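The decompose, retrieve-in-parallel, synthesise flow described above can be sketched in Python with `asyncio.gather`. Everything here is a hypothetical toy: `CORPUS` stands in for the indexed web, word overlap stands in for the semantic matching a real system uses, and the "synthesis" step simply keeps the best passage per track instead of writing prose.

```python
import asyncio
import re

# Toy corpus of passages (hypothetical; stands in for an indexed web).
CORPUS = [
    "Our ERP ships shop-floor scheduling and bill-of-materials workflows for discrete manufacturing.",
    "Localisation packs cover VAT, GST and regional tax reporting in 40 countries.",
    "Native connectors sync inventory and production orders with common supply chain tools.",
    "Case study: a 300-employee manufacturer rolled out the ERP across three countries.",
    "Our company was founded with a passion for innovation.",
]

# The four evaluation tracks from the ERP example, expressed as sub-queries.
TRACKS = {
    "manufacturing fit": "manufacturing workflows scheduling bill-of-materials",
    "localisation": "regional tax reporting localisation countries",
    "integration": "inventory production supply chain connectors",
    "proof": "case study manufacturer employee countries",
}


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9-]+", text.lower()))


async def retrieve(track: str, query: str) -> tuple[str, str]:
    """Return the passage with the highest word overlap for one track.

    Word overlap is a crude stand-in for the semantic similarity a real system uses.
    """
    await asyncio.sleep(0)  # placeholder for a network-bound search call
    q = tokens(query)
    best = max(CORPUS, key=lambda passage: len(q & tokens(passage)))
    return track, best


async def answer() -> dict[str, str]:
    # Fan out: every track is retrieved concurrently.
    results = await asyncio.gather(*(retrieve(t, q) for t, q in TRACKS.items()))
    # Synthesis (simplified): one passage per track, merged into one answer.
    return dict(results)


if __name__ == "__main__":
    for track, passage in asyncio.run(answer()).items():
        print(f"[{track}] {passage}")
```

In this toy run the generic "passion for innovation" passage is never selected for any track, which mirrors the point that content without branch-specific detail tends to be passed over.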

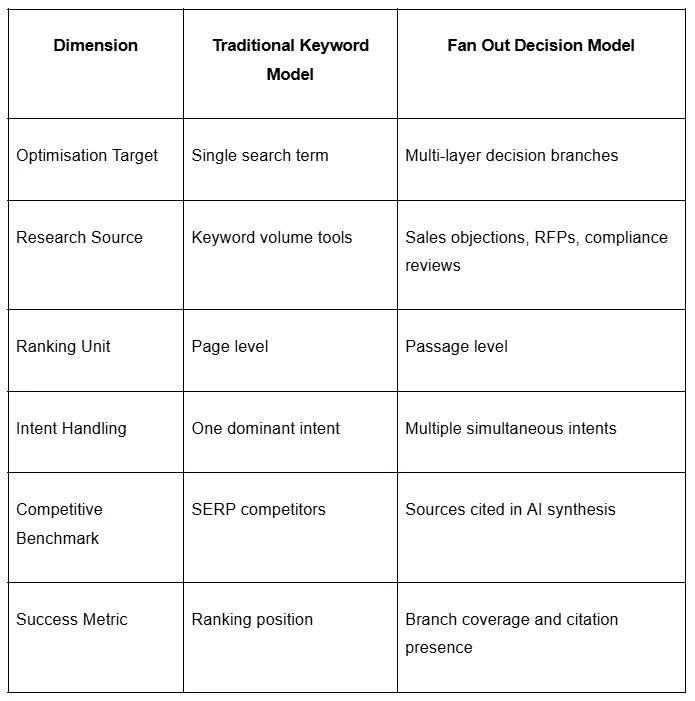

Comparing the Traditional Keyword Model & Fan-Out Decision Model

Traditional SEO optimises around explicit phrases with measurable volume. Fan-out SEO optimises around implicit decision logic that rarely appears in keyword tools.

The difference becomes clear when both approaches are applied to the same buying decision.

For example, the keyword “best CRM software” may have high search volume. However, a real prompt from a CFO may include constraints such as total cost under a defined threshold, integration with existing ERP, GDPR compliance, and documented onboarding timelines.

Most of these constraints never appear in keyword databases. Query decomposition exposes hidden demand layers that reflect real buying pressure.

The decision surface comprises all the conditions that must be met before approval. Ranking for the headline term does not guarantee that your content covers that surface.
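One way to reason about the decision surface is as a set of constraints, with each page scored on how many of them it answers. This minimal sketch assumes you have already audited which constraints each page addresses; the page names, the audit results, and the `coverage` and `gaps` helpers are hypothetical.

```python
# Hypothetical constraints from the CFO prompt: the "decision surface".
DECISION_SURFACE = {
    "total cost under threshold",
    "ERP integration",
    "GDPR compliance",
    "onboarding timeline",
}

# Hypothetical audit: which constraints each page clearly answers.
PAGES = {
    "best-crm-software": {"total cost under threshold"},  # ranks for the headline term
    "crm-for-finance-teams": {"ERP integration", "GDPR compliance", "onboarding timeline"},
}


def coverage(answered: set[str], surface: set[str]) -> float:
    """Share of the decision surface a page answers, from 0.0 to 1.0."""
    return len(answered & surface) / len(surface)


def gaps(answered: set[str], surface: set[str]) -> list[str]:
    """Constraints the page leaves unanswered, sorted for stable output."""
    return sorted(surface - answered)


if __name__ == "__main__":
    for page, answered in PAGES.items():
        print(f"{page}: {coverage(answered, DECISION_SURFACE):.0%} covered, "
              f"missing {gaps(answered, DECISION_SURFACE)}")
```

In this made-up audit, the page that ranks for the headline term covers a quarter of the surface, while a narrower page covers three quarters of it.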

How to Measure and Improve Your Fan-Out SEO Performance?

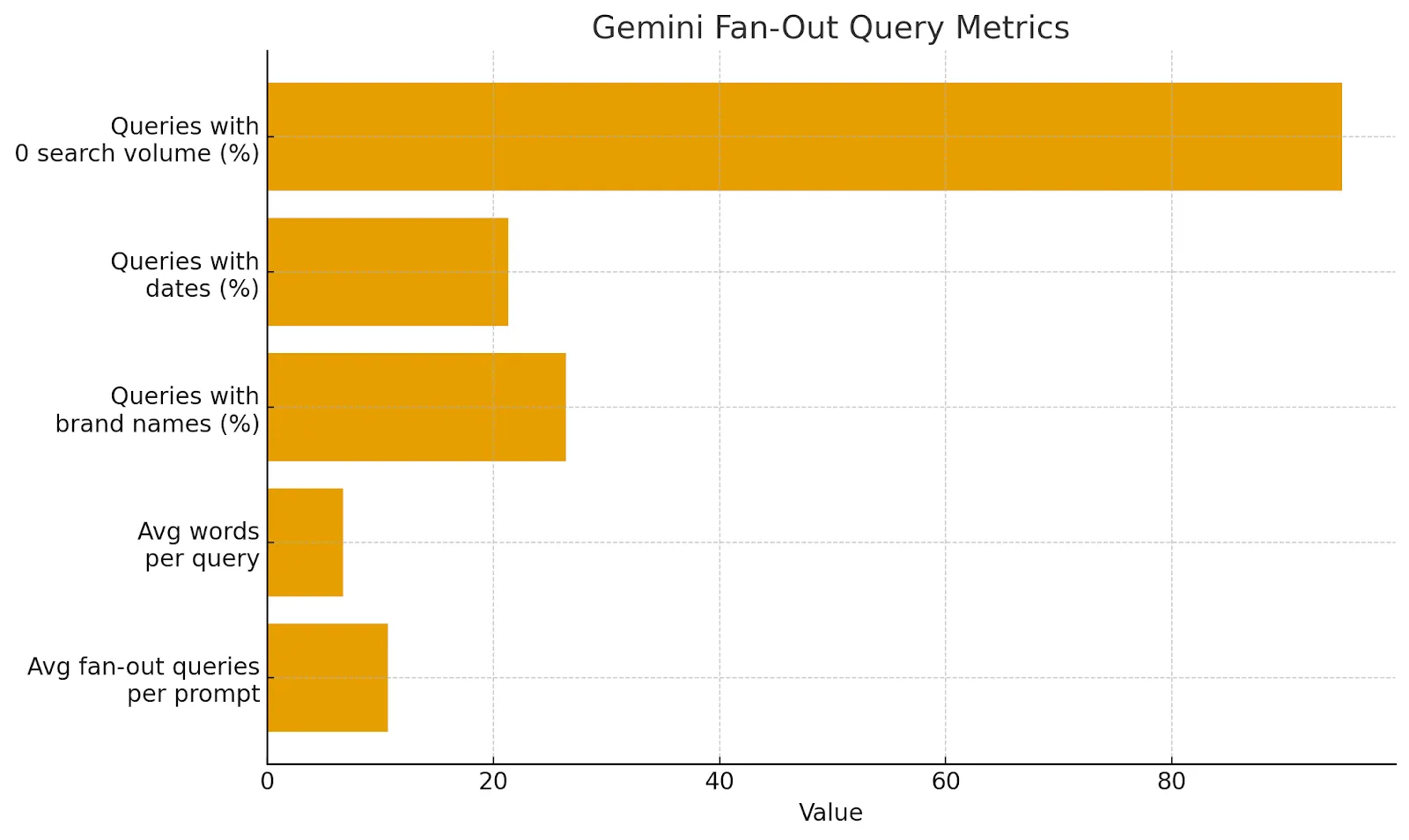

To manage your fan-out optimisation effectively, you need to track metrics that reflect how AI systems operate. Below are insights from recent research that you should monitor:

- Number of fan-out queries per prompt: Gemini 3 averages 10.7 sub-queries per prompt, while earlier versions averaged around 6. A higher number suggests the AI is probing intent in finer detail.

- Length of sub-queries: Fan-outs average 6.7 words, reflecting long-tail specificity. This is a signal that the AI is probing for fine-grained details.

- Brand inclusion: 26.4% of fan-out queries contain a brand name. Getting your brand mentioned in these queries increases your chances of citation.

- Date inclusion: 21.3% of fan-out queries include a year. Publish and update dates matter. Keep your content fresh.

- Search volume: 95% of fan-out queries have zero search volume. Don’t rely solely on keyword tools; instead, focus on intent and context.

Key fan-out query metrics from our analysis of Gemini’s retrieval behaviour (source: Seer Interactive’s Gemini Fan-Out Research Dataset).
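If you collect fan-out sub-queries yourself, for example with the browser inspection method covered later in this article, you can compute the same four metrics for your own category. This is a minimal sketch: `LOG` and `BRANDS` are hypothetical, brand detection is plain substring matching, and a "year" is any four-digit number from 1900 to 2099.

```python
import re
from statistics import mean

# Hypothetical log: each prompt mapped to the fan-out sub-queries captured for it.
LOG = {
    "best CRM for mid-market SaaS": [
        "HubSpot vs Salesforce pricing 2025 mid-market",
        "CRM integrations with Slack and Jira",
        "CRM SOC 2 compliance mid-market SaaS",
    ],
    "ERP for small manufacturers": [
        "ERP manufacturing workflows under 500 employees",
        "best ERP for multi-country tax compliance 2025",
    ],
}
BRANDS = {"hubspot", "salesforce"}  # lowercase brand names to detect (assumption)
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")  # treats 1900-2099 as a year


def fan_out_metrics(log: dict[str, list[str]], brands: set[str]) -> dict[str, float]:
    queries = [q for qs in log.values() for q in qs]
    return {
        "subqueries_per_prompt": mean(len(qs) for qs in log.values()),
        "avg_words": mean(len(q.split()) for q in queries),
        # Naive substring match; a real pipeline would need proper entity matching.
        "brand_share": sum(any(b in q.lower() for b in brands) for q in queries) / len(queries),
        "year_share": sum(bool(YEAR.search(q)) for q in queries) / len(queries),
    }


if __name__ == "__main__":
    for name, value in fan_out_metrics(LOG, BRANDS).items():
        print(f"{name}: {value:.2f}")
```

Tracked over time, these numbers show whether the branches in your category are getting more specific, more brand-led, or more date-sensitive.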

How to Structure Your Pages for LLM Retrieval?

Large language models extract specific passages that match sub-queries with high semantic precision.

If a section heading reads “Why Choose Our Platform,” the retrieval signal is weak because the intent is ambiguous.

If the heading reads “How Our Platform Supports SOC 2 and GDPR Compliance,” the retrieval signal aligns directly with a compliance branch.

Clarity at the heading level increases the likelihood of extraction.
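The heading comparison above can be illustrated with a crude similarity score. This sketch uses token-set Jaccard similarity as a stand-in for the embedding similarity a real retriever would use, so the scores are only illustrative, and the sub-query text is hypothetical.

```python
import re


def tokens(text: str) -> set[str]:
    # Lowercased word tokens; "SOC 2" becomes {"soc", "2"}.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Token-set Jaccard similarity, a crude stand-in for embedding similarity."""
    ta, tb = tokens(a), tokens(b)
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


SUB_QUERY = "does the platform support SOC 2 and GDPR compliance"  # hypothetical
HEADINGS = [
    "Why Choose Our Platform",
    "How Our Platform Supports SOC 2 and GDPR Compliance",
]

ranked = sorted(HEADINGS, key=lambda h: jaccard(SUB_QUERY, h), reverse=True)

if __name__ == "__main__":
    for heading in ranked:
        print(f"{jaccard(SUB_QUERY, heading):.2f}  {heading}")
```

Here the compliance heading scores 0.50 against 0.08 for the generic heading, because nearly every term in the sub-query appears in it.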

Each section should begin with a direct conclusion that answers a specific decision question. Supporting evidence should be presented in a structured format.

For example, a section on integration feasibility should begin by explicitly naming the supported systems, then explain the integration architecture, data flow, security, and deployment timeline.

Tables strengthen comparative branches by presenting structured contrasts without narrative interpretation.

Short, precise paragraphs reduce semantic drift and improve extractability.

Structure is therefore not aesthetic. It directly influences whether your content is selected during synthesis.

How to Check What ChatGPT Searches Behind Your Query?

If your competitors keep appearing in AI answers while your brand does not, the issue is not rankings. The issue is branch coverage.

Large language models break your prompt into multiple sub-queries before generating a response. Those sub-queries help determine which brands get included.

Enter a commercial query that matters to your business into ChatGPT. After the answer loads, right-click the page and open Inspect. Go to the Network tab, refresh the page, open the main response request, and search the response payload for the word “queries”.

You will see the exact sub-queries generated from your original prompt.
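Once you have copied the response body from the Network tab, a short script can pull the sub-queries out of it. This is a sketch under loose assumptions: it relies only on the key name “queries” mentioned above, and it guesses that the body is either plain JSON or a stream of `data:` lines. ChatGPT's payload format is undocumented and may change, and the `SAMPLE` string is made up for illustration.

```python
import json
from typing import Any, Iterator


def find_queries(node: Any) -> Iterator[str]:
    """Yield every string stored in a list under a key named "queries", at any depth."""
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "queries" and isinstance(value, list):
                yield from (v for v in value if isinstance(v, str))
            else:
                yield from find_queries(value)
    elif isinstance(node, list):
        for item in node:
            yield from find_queries(item)


def queries_from_payload(raw: str) -> list[str]:
    """Collect unique sub-queries from plain JSON or from stream-style "data:" lines."""
    chunks: list[Any] = []
    try:
        chunks.append(json.loads(raw))
    except json.JSONDecodeError:
        for line in raw.splitlines():
            line = line.strip()
            if not line.startswith("data:"):
                continue
            try:
                chunks.append(json.loads(line[len("data:"):].strip()))
            except json.JSONDecodeError:
                continue  # skip non-JSON events such as "[DONE]"
    seen: set[str] = set()
    unique: list[str] = []
    for chunk in chunks:
        for query in find_queries(chunk):
            if query not in seen:
                seen.add(query)
                unique.append(query)
    return unique


# Made-up sample body; the real payload shape is undocumented.
SAMPLE = (
    'data: {"message": {"metadata": {"queries": '
    '["best CRM mid-market SaaS", "CRM pricing 2025"]}}}\n'
    "data: [DONE]\n"
)

if __name__ == "__main__":
    print(queries_from_payload(SAMPLE))
```

Because the search is by key name at any depth, the script keeps working if the key moves to a different nesting level, but it will need adjusting if the key itself is renamed.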

Your competitors are not winning because they wrote better headlines. They are winning because they satisfy more of the system’s internal decision branches.

Repeat this process across multiple high-value prompts in your category. Patterns will emerge, giving you a repeatable way to track the sub-queries ChatGPT runs for your market.
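To surface those patterns, you can count which terms recur in the sub-queries of several different prompts. The captured sub-queries and stopword list below are hypothetical, and raw term counts are a deliberately simple proxy for the decision branches a system keeps checking.

```python
import re
from collections import Counter

# Hypothetical sub-queries captured from several prompts in one category.
CAPTURED = {
    "best CRM for mid-market SaaS": [
        "CRM pricing mid-market", "CRM SOC 2 compliance", "CRM migration effort",
    ],
    "CRM for fintech startups": [
        "fintech CRM compliance", "CRM pricing startups", "CRM integrations banking APIs",
    ],
    "HubSpot alternatives": ["HubSpot alternatives pricing", "CRM compliance GDPR"],
}
STOPWORDS = {"crm", "for", "the", "and", "vs"}  # assumption: tune per category


def recurring_terms(captured: dict[str, list[str]], min_prompts: int = 2) -> list[tuple[str, int]]:
    """Terms that appear in the sub-queries of at least `min_prompts` different prompts."""
    counts = Counter()
    for subqueries in captured.values():
        # One set per prompt, so each prompt counts a term at most once.
        terms = {t for q in subqueries for t in re.findall(r"[a-z0-9]+", q.lower())}
        counts.update(terms - STOPWORDS)
    hits = [(term, n) for term, n in counts.items() if n >= min_prompts]
    # Most widespread first; alphabetical among ties for stable output.
    return sorted(hits, key=lambda item: (-item[1], item[0]))


if __name__ == "__main__":
    print(recurring_terms(CAPTURED))
```

In this toy data, pricing and compliance recur across all three prompts, which would point to those branches as the ones worth covering first.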

Watch this short video for a step-by-step walkthrough of how to inspect ChatGPT and uncover the exact sub-queries driving AI search decisions: https://www.instagram.com/reel/DUnZurnCrVS/?igsh=NjkxY28wanYzNzNk

Measuring Visibility in an LLM-Driven Search Ecosystem

Traditional ranking metrics reflect visibility in list-based search environments.

Fan-out environments require branch-level visibility tracking.

A brand may rank lower in traditional SERPs yet consistently appear in AI-generated summaries for high-value prompts.

Measurement should include presence inside AI answers, citation frequency for commercial queries, and brand mention consistency across competitive comparisons.
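Branch-level visibility can be tracked with a small log of captured AI answers. The sketch below assumes a hypothetical record format (`AnswerRecord`), a made-up brand ("Acme") and domain ("acme.example"), and treats every tracked prompt as commercial; it computes presence inside answers, citation frequency, and mention consistency across comparison prompts.

```python
from dataclasses import dataclass, field


@dataclass
class AnswerRecord:
    """One captured AI answer for a tracked commercial prompt (hypothetical format)."""
    prompt: str
    is_comparison: bool  # e.g. "X vs Y" or "best X for Y" prompts
    mentioned_brands: set[str] = field(default_factory=set)
    cited_domains: set[str] = field(default_factory=set)


def visibility_report(records: list[AnswerRecord], brand: str, domain: str) -> dict[str, float]:
    comparisons = [r for r in records if r.is_comparison]
    return {
        # Presence inside AI answers: share of answers that mention the brand.
        "presence_rate": sum(brand in r.mentioned_brands for r in records) / len(records),
        # Citation frequency: share of answers that cite the brand's domain.
        "citation_rate": sum(domain in r.cited_domains for r in records) / len(records),
        # Mention consistency across competitive comparisons.
        "comparison_mention_rate": (
            sum(brand in r.mentioned_brands for r in comparisons) / len(comparisons)
            if comparisons else 0.0
        ),
    }


RECORDS = [
    AnswerRecord("Acme vs Rival CRM", True, {"Acme", "Rival"}, {"acme.example"}),
    AnswerRecord("best CRM for fintech", True, {"Rival"}, {"rival.example"}),
    AnswerRecord("CRM with GDPR compliance", False, {"Acme"}),
    AnswerRecord("CRM onboarding timeline", False, {"Acme"}, {"acme.example"}),
]

if __name__ == "__main__":
    print(visibility_report(RECORDS, brand="Acme", domain="acme.example"))
```

Keeping presence and citation separate matters because a brand can be named in an answer without its own pages being the cited source, and vice versa.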

In LLM-powered search environments, authority compounds when every critical branch of a buying decision is addressed with precision and proof.

B2B brands that design for decision architecture will not simply rank. They will be referenced, synthesised, and trusted.
